By Study Finds

An innovative “electronic tongue” that could replicate human preferences for certain foods is in development. Researchers at Penn State University say the breakthrough comes as scientists aim to integrate emotional intelligence, which includes likes and dislikes influenced by taste, into artificial intelligence (AI).

AI has advanced significantly over the years. However, its current models largely disregard human psychology, including the nuances of emotional intelligence.

“The main focus of our work was how could we bring the emotional part of intelligence to AI,” says corresponding author Saptarshi Das, associate professor of engineering science and mechanics at Penn State, in a university release. “Emotion is a broad field and many researchers study psychology; however, for computer engineers, mathematical models and diverse data sets are essential for design purposes. Human behavior is easy to observe but difficult to measure and that makes it difficult to replicate in a robot and make it emotionally intelligent. There is no real way right now to do that.”

Eating habits serve as a prime example of this emotional intelligence. While hunger is the physiological drive to eat, our choices of what to eat are influenced by our sense of taste, a process known as gustation. As Das explains, even when a person is not hungry, psychological desire can drive them to choose a sweet treat over, say, a piece of meat.

“If you are someone fortunate to have all possible food choices, you will choose the foods you like most,” explains Das. “You are not going to choose something that is very bitter, but likely try for something sweeter, correct?”

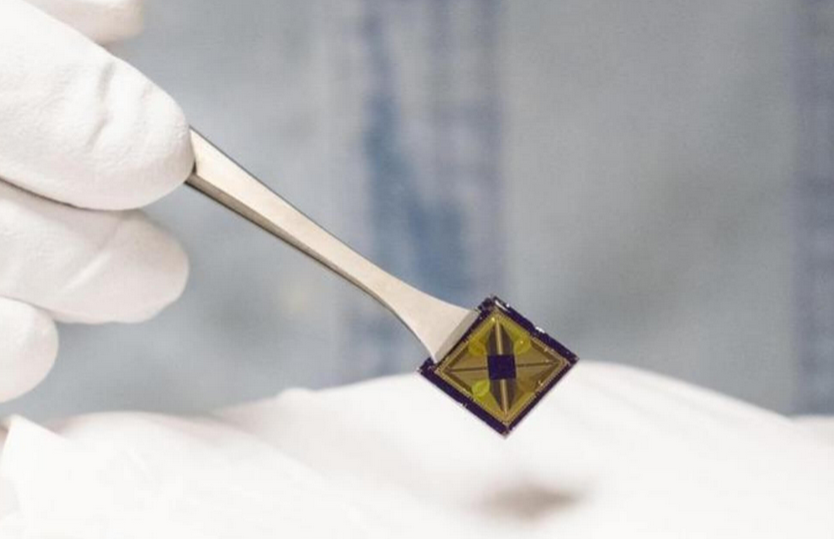

To grasp the intricacies of taste, Penn State researchers looked to the human tongue, which translates chemical data into electrical signals sent to the brain’s gustatory cortex. In this brain region, complex neuronal networks shape our taste perception. To mimic this, the team crafted an electronic “tongue” using chemitransistors made of graphene to detect chemical molecules, combined with memtransistors constructed from molybdenum disulfide. The resultant “electronic gustatory cortex” connects a “hunger neuron,” “appetite neuron,” and a “feeding circuit.”

“This means the device can ‘taste’ salt,” says study co-author Subir Ghosh, a doctoral student in engineering science and mechanics.

The team utilized two distinct 2D materials for their project.

“We used two separate materials because while graphene is an excellent chemical sensor, it is not great for circuitry and logic, which is needed to mimic the brain circuit,” says study co-author Andrew Pannone, a graduate research assistant in engineering science and mechanics. “For that reason, we used molybdenum disulfide, which is also a semiconductor. By combining these nanomaterials, we have taken the strengths from each of them to create the circuit that mimics the gustatory system.”
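As a rough illustration of how a “hunger neuron,” an “appetite neuron,” and a “feeding circuit” might fit together, here is a minimal toy sketch in Python. It is not the researchers’ circuit or model: the class name, the thresholds, the arbitrary 0-to-1 signal scale, and the rule that either drive alone triggers feeding are all assumptions made for illustration, loosely based on Das’s point that appetite can prompt eating even without hunger.

```python
from dataclasses import dataclass


@dataclass
class GustatoryCircuit:
    """Toy stand-in for the 'electronic gustatory cortex' (illustrative only)."""

    hunger_threshold: float = 0.5    # assumed value, arbitrary units
    appetite_threshold: float = 0.5  # assumed value, arbitrary units

    def hunger_neuron(self, energy_deficit: float) -> bool:
        # Fires when the physiological need to eat is high enough.
        return energy_deficit >= self.hunger_threshold

    def appetite_neuron(self, taste_signal: float) -> bool:
        # Fires when the sensed taste is appealing enough.
        return taste_signal >= self.appetite_threshold

    def feeding_circuit(self, energy_deficit: float, taste_signal: float) -> bool:
        # Assumption: either drive on its own is enough to trigger feeding.
        return self.hunger_neuron(energy_deficit) or self.appetite_neuron(taste_signal)


circuit = GustatoryCircuit()

# Not hungry, but the taste is appealing: appetite alone triggers feeding.
print(circuit.feeding_circuit(energy_deficit=0.1, taste_signal=0.9))  # True

# Not hungry and the taste is unappealing: no feeding.
print(circuit.feeding_circuit(energy_deficit=0.1, taste_signal=0.1))  # False
```

In the actual device, the sensing is done by graphene chemitransistors and the logic by molybdenum disulfide memtransistors; the sketch above only mirrors the decision logic described in the article at a very coarse level.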

Subir Ghosh, doctoral student in engineering science and mechanics, left, and Andrew Pannone, graduate research assistant in engineering science and mechanics, at work in a lab in the Millennium Science Complex on Penn State’s University Park campus. (CREDIT: Das Research Lab/Penn State)

This technology isn’t limited to one taste. It can be adapted to recognize all primary taste profiles, from sweet and salty to umami. Looking ahead, the researchers see numerous applications, including AI-designed diets based on emotional preferences or tailored restaurant meal suggestions.

The team’s immediate goal is to expand the electronic tongue’s capacity.

“We are trying to make arrays of graphene devices to mimic the 10,000 or so taste receptors we have on our tongue that are each slightly different compared to the others, which enables us to distinguish between subtle differences in tastes,” says Das. “The example I think of is people who train their tongue and become a wine taster. Perhaps in the future we can have an AI system that you can train to be an even better wine taster.”

Further advancements will focus on integrating the electronic tongue and gustatory circuit into one chip. Beyond taste, the team envisions broadening this concept to incorporate other senses, leading to a more holistic and emotionally intelligent AI system.

“The circuits we have demonstrated were very simple, and we would like to increase the capacity of this system to explore other tastes,” explains Pannone. “But beyond that, we want to introduce other senses and that would require different modalities, and perhaps different materials and/or devices. These simple circuits could be more refined and made to replicate human behavior more closely. Also, as we better understand how our own brain works, that will enable us to make this technology even better.”

The study is published in the journal Nature Communications.

Source: Study Finds
