It is unfortunate that citizens and experts alike are only now waking up to the threat of artificial intelligence being used by law enforcement, when the genie is already out of the bottle. It is especially troubling to see the high-level institutions behind the creation of these systems now warning of the miscalculations that can result from rolling out artificial intelligence before it is properly understood. But, as they say, better late than never.

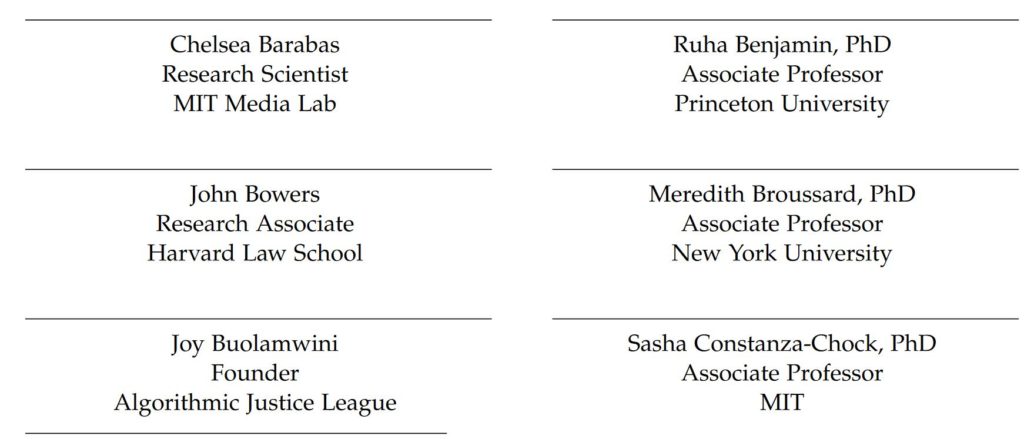

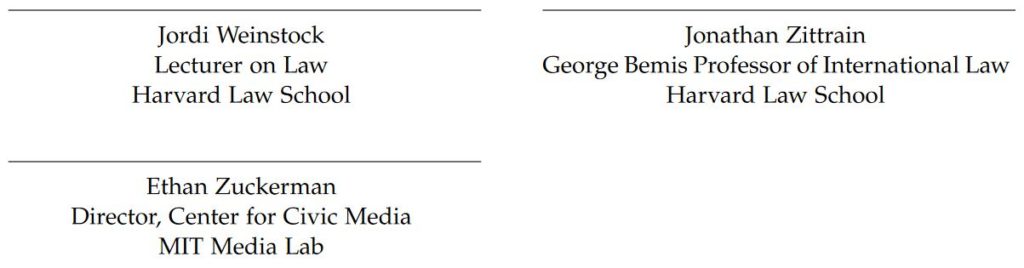

A new paper released by MIT, entitled “Technical Flaws of Pretrial Risk Assessments Raise Grave Concerns,” has been signed by some of the highest-level university experts in the fields of A.I. and law.

Despite this very esteemed panel of mainstream contributors, their concerns about the current state of artificial intelligence in law enforcement and the justice system align with those with which readers of alternative media would already be quite familiar.

The document naturally contains some technical jargon and euphemistic language, but it is still fairly concise at around four pages. The authors' main concerns appear in the Summary (emphasis mine):

> Actuarial pretrial risk assessments suffer from serious technical flaws that undermine their accuracy, validity, and effectiveness. They do not accurately measure the risks that judges are required by law to consider. When predicting flight and danger, many tools use inexact and overly broad definitions of those risks. When predicting violence, no tool available today can adequately distinguish one person’s risk of violence from another. Misleading risk labels hide the uncertainty of these high-stakes predictions and can lead judges to overestimate the risk and prevalence of pretrial violence. To generate predictions, risk assessments rely on deeply flawed data, such as historical records of arrests, charges, convictions, and sentences. This data is neither a reliable nor a neutral measure of underlying criminal activity. Decades of research have shown that, for the same conduct, African-American and Latinx people are more likely to be arrested, prosecuted, convicted and sentenced to harsher punishments than their white counterparts. Risk assessments that incorporate this distorted data will produce distorted results. These problems cannot be resolved with technical fixes. We strongly recommend turning to other reforms.

That is about as comprehensive a condemnation as one could hope to find. And yet we already live in a world where smart home gadgets are snitching on their owners by calling the police. Police departments have experimented with secret pre-crime A.I. systems that have put innocent people on watchlists. Facial recognition is widely used by government and the private sector to identify criminal threats, and it is also being integrated into financial systems. And the military now feels comfortable rolling out new facial recognition systems that its soldiers can connect to their weapons in order to target people identified by A.I. as potential threats or even confirmed enemies.

I would encourage people to become familiar with the technical aspects of these systems described in the paper above. These systems are now admittedly “no better than a crystal ball,” yet they threaten the core American principle of being innocent until proven guilty, and they could put anyone in the crosshairs of a military scope if they are not reined in immediately.

Fortunately, the pushback has already started in several cities, and a few police departments have dropped their programs after becoming aware of the inaccuracies. Let’s work to keep the momentum moving in the right direction.

Nicholas West writes for Activist Post.

H/T MailOnline