Facebook is gearing up for the upcoming midterm elections by building a physical “war room” in order to fight off “bad actors” who wish to influence voters.

Facebook’s head of civic engagement, Samidh Chakrabarti, told NBC in an interview published Tuesday that the company was in a better place than it was in 2016 with regard to fighting “misinformation” and “fake news.”

“I think we are in a much better place than we were in 2016. But it is an arms race. And so that’s why we’re remaining ever vigilant, laser-focused to make sure that we can stay ahead of new problems that emerge,” Chakrabarti said.

According to the top executive, the social giant has become effective over the past two years in “combating foreign interference” and blocking and deleting unwanted “fake accounts.”

Facebook is taking its “responsibility” (as Mark Zuckerberg put it) to battle these potential bad actors so seriously that it will set up a physical “war room” where, working in real time, the company hopes to guarantee fair elections.

The command-center-like space will be staffed by people from different trades who will be able to “take quick and decisive action” if needed.

When asked about some of the tactics and strategies his company was using to detect malicious activity, Chakrabarti admitted that Facebook is “just one small part of a much bigger puzzle” that includes governments and “security experts” around the world.

“We’ve been working with governments around the world, with security experts around the world, with civic society around the world to share information about threats that we see. And we bring those together and we put our best intelligence investigators on it to find that kind of activity on our platform and take it down,” the Facebook executive said.

The interview does not say which security experts Facebook has been consulting, a lack of detail that prompted RT to comment:

… its partnership with the Atlantic Council is a good indication of precisely how ‘unbiased’ one can expect them to be. The Council is basically an academic arm of NATO which frequently hosts lively debates between assorted Russophobes. It also has a dedicated team of couch investigators who are skilled in detecting so-called “Russian bots” among social media users based on imperfections in their English.

NBC described Facebook’s goal in fighting misinformation as a way to “prevent another 2016,” referring to company claims that 126 million Americans received Russian-backed content on its platform during the run-up to Trump’s election victory.

Chakrabarti also confirmed close cooperation between Facebook and other tech media giants, revealing that the recent social media ban wave was a result of “exchanging information.”

As an example, with the takedowns that we did just a few weeks ago, we’ve been working with our industry partners on this, exchanging information. And that has really yielded a lot of benefits. The benefit that we see is we are able to get more information about particular bad actors and then we’re able to take them off of the platform. And we can similarly, reciprocally, provide that kind of help to others in the industry.

When asked directly if Facebook discriminates against conservatives, the head of civic engagement denied any bias, saying that the platform is “agnostic of people’s political views.”

As a report in the New York Times revealed last week, dozens of Facebook employees have organized against what they call the company’s “intolerant” liberal culture.

Joseph Jankowski is a contributor to PlanetFreeWill.com, where this article first appeared. His works have been published by globally recognizable news sites like Infowars.com, ZeroHedge.com, GlobalResearch.ca, and ActivistPost.com.

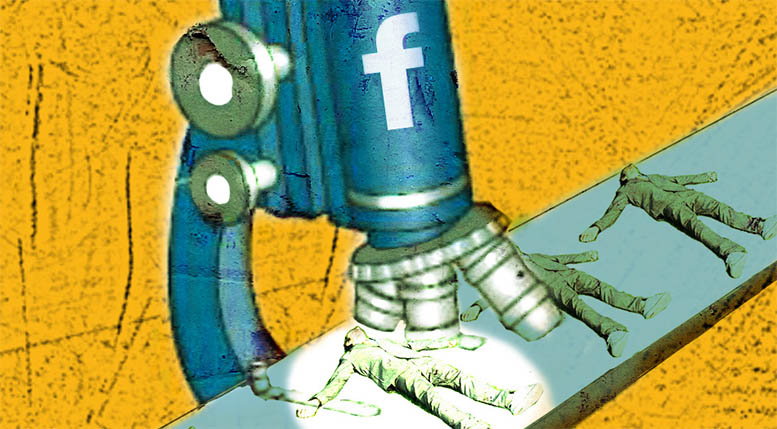

Image credit: Anthony Freda Art

In other words, real-time censorship to make sure as few Republicans win as possible. If they are using FB to communicate when it really counts they will suddenly have “technical difficulties”.

OH PLEASE ….. Facebook is building a war room to stop any and all support of conservative candidates and ideas.

“(P)revent another 2016.” So, exactly what was wrong with the 2016 election? Trump supporters had enough of Hitlery and her bunch telling people what to do. Her corruption and lousily run campaign are why she lost. She bought the most Russian bots to push her campaign.

So, we can’t have another surprise winner in the next election. By the way – if the Left takes over in 2018, it will be our last free election. They have sworn to make it so that they will never, EVER, lose power again – no matter what.

Besides, who left Facebook, Twitter, and Google to monitor our elections and determine what speech gets censored? It sounds – from the hearings in the Senate – like the Senate gave that task to the Social Media Bums. Democracy in Action!