By B.N. Frank

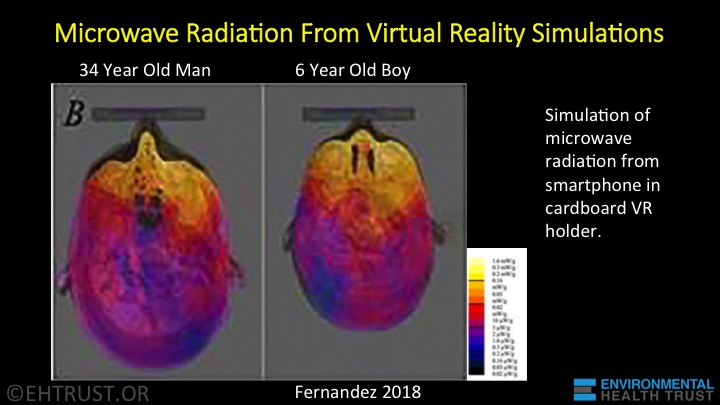

Warnings about virtual reality (VR) use are not new, and not only because users are often exposed to unwanted and sometimes unlawful content and behavior. Research has also indicated that VR use can cause behavioral changes, balance problems (see 1, 2), cognitive problems, eye problems (soreness, vision changes), headaches and other discomforts, skin issues, and other short-term and/or long-term health issues. In fact, children absorb 2-5 times more harmful radiation than adults while using VR systems. Additionally, research continues to warn that exposure to blue light from screens and LED bulbs is also biologically harmful (see 1, 2, 3, 4, 5, 6, 7, 8, 9, 10, 11, 12, 13). Nevertheless, VR, AR (augmented reality), and mixed reality (MR) systems are increasingly being promoted for use by people of all ages and for a variety of purposes. Of course, those promoting their use are often interested in collecting users’ personal data. D’uh!

From Wired:

Meta’s VR Headset Harvests Personal Data Right Off Your Face

Cameras inside the device that track eye and face movements can make an avatar’s expressions more realistic, but they raise new privacy questions.

In November 2021, Facebook announced it would delete face recognition data extracted from images of more than 1 billion people and stop offering to automatically tag people in photos and videos. Luke Stark, an assistant professor at Western University, in Canada, told WIRED at the time that he considered the policy change a PR tactic because the company’s VR push would likely lead to the expanded collection of physiological data and raise new privacy concerns.

This week, Stark’s prediction proved right. Meta, as the company that built Facebook is now called, introduced its latest VR headset, the Quest Pro. The new model adds a set of five inward-facing cameras that watch a person’s face to track eye movements and facial expressions, allowing an avatar to reflect their expressions, smiling, winking, or raising an eyebrow in real time. The headset also has five exterior cameras that will in the future help give avatars legs that copy a person’s movements in the real world.

After Meta’s presentation, Stark said the outcome was predictable. And he suspects that the default “off” setting for face tracking won’t last long. “It’s been clear for some years that animated avatars are acting as privacy loss leaders,” he said. “This data is far more granular and far more personal than an image of a face in the photograph.”

At the event announcing the new headset, Mark Zuckerberg, Meta’s CEO, described the intimate new data collection as a necessary part of his vision for virtual reality. “When we communicate, all our nonverbal expressions and gestures are often even more important than what we say, and the way we connect virtually needs to reflect that too,” he said.

Zuckerberg also said the Quest Pro’s internal cameras, combined with cameras in its controllers, would power photorealistic avatars that look more like a real person and less like a cartoon. No timeline was offered for that feature’s release. A VR selfie of Zuckerberg’s cartoonish avatar that he later admitted was “basic” became a meme this summer, prompting Meta to announce changes to its avatars.

Companies including Amazon and various research projects have previously used conventional photos of faces to try to predict a person’s emotional state, despite a lack of evidence that the technology can work. Data from Meta’s new headset might provide a fresh way to infer a person’s interests or reactions to content. The company is experimenting with shopping in virtual reality and has filed patents envisioning personalized ads in the metaverse, as well as media content that adapts in response to a person’s facial expressions.

In a briefing with journalists last week, Meta product manager Nick Ontiveros said the company doesn’t use that information to predict emotions. Raw images and pictures used to power these features are stored on the headset, processed locally on the device, and deleted after processing, Meta says. Eye-tracking and facial-expression privacy notices the company published this week state that although raw images get deleted, insights gleaned from those images may be processed and stored on Meta servers.

That data on a Quest Pro user’s face and eye movements can also be broadcast to companies beyond Meta. A new Movement SDK will grant developers outside the company access to abstracted gaze and facial expression data to animate avatars and characters. Meta’s privacy policy for its headsets says that data shared with outside services “will be subject to their own terms and privacy policies.”

Technology that captures expressions is already at work in photo apps and iPhone memoji, but Meta said this week that real-time body language capture is key to the company’s ambition to have people wear virtual reality headsets to join meetings or do their jobs. Meta announced it will soon integrate Microsoft productivity software, including Teams and Microsoft 365, into its virtual reality platform. Autodesk and Adobe are working on VR apps for designers and engineers, and an integration with Zoom will soon allow people to arrive at video meetings as their Meta avatar.

As with Meta’s Portal device for home video calls, the success of the Quest Pro may depend in part on whether people will buy hardware with novel data-collecting capabilities from a company with a record of failing to protect user data or to monitor the activity of third-party developers with access to its platform, like Cambridge Analytica. That may add to the existing challenges of selling Mark Zuckerberg’s vision for the metaverse. Meta has reported no more than 300,000 monthly active users for its flagship social VR platform, Horizon Worlds. The New York Times recently reported that even Meta employees working on the project used Horizon Worlds very little.

Avi Bar-Zeev, a consultant on virtual and augmented reality who helped create Microsoft’s HoloLens headset, gives Meta credit for deleting images from interior cameras on its headset. He also wrote in 2020 that the device’s predecessor, the Quest 2, had “serious privacy issues,” and he says he feels the same way about the Quest Pro.

Bar-Zeev worries that face and eye movement data could allow Meta or other companies to emotionally exploit people in VR by watching how they respond to content or experiences. “My concern is not that we’re going to be served a bunch of ads that we hate,” he says. “My concern is that they’re going to know so much about us that they’re going to serve us a bunch of ads that we love, and we’re not even going to know that they’re ads.”

Kavya Pearlman, founder of the XR Safety Initiative, a nonprofit advising businesses and government regulators on safety and ethics in the metaverse, also has concerns. A former security director at Linden Lab, the company that created Second Life, she has advised Facebook on security but says Meta’s previous scandals make her distrustful of the company.

Pearlman received a demo of the Quest Pro before its launch and found that the screens prompting users to activate face and eye tracking had “dark patterns” apparently designed to nudge people to adopt the technology. The US Federal Trade Commission released a report last month advising companies to not use designs that subvert privacy options.

“My background suggests that we are on a very dangerous path, and if we aren’t careful our autonomy, free will, and agency are at risk,” says Pearlman. She says companies working on VR should publicly discuss what data they collect and share and should set tight limits on the inferences they will ever make about people.

Activist Post reports regularly about AR, MR, VR, and other unsafe technology. For more information, visit our archives and the following websites:

- Environmental Health Trust

- Electromagnetic Radiation Safety

- Physicians for Safe Technology

- Wireless Information Network
