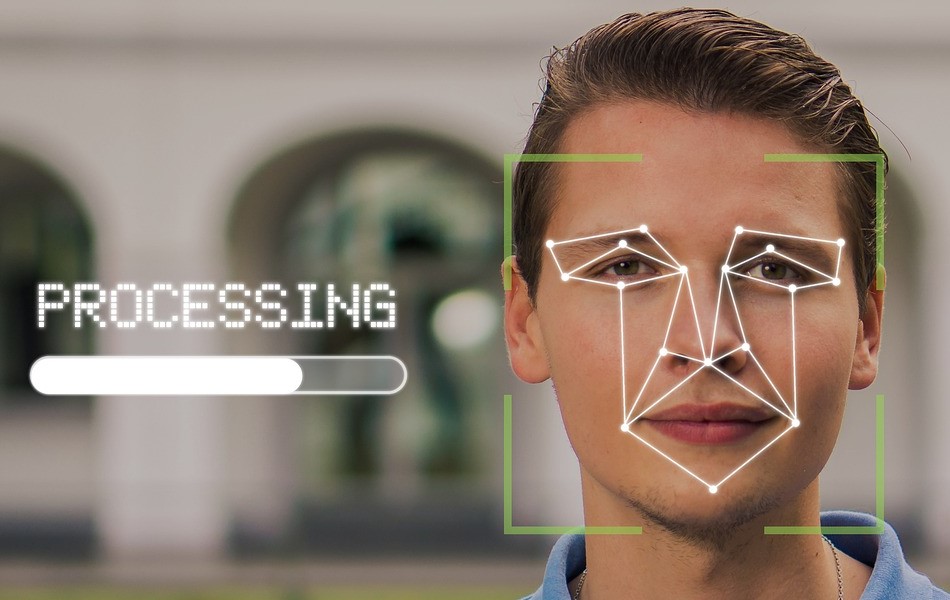

You’re probably aware that over the past several months, Facebook has come under heavy scrutiny for a variety of unethical business practices. The good news is that even though other companies still offer facial recognition apps and software to customers, Facebook has decided to shut down its facial recognition system.

From Ars Technica:

Facebook to stop using facial recognition, delete data on over 1 billion people

Regulatory pressure and massive lawsuits may have forced its hand.

Facebook introduced facial recognition in 2010, allowing users to automatically tag people in photos. The feature was intended to ease photo sharing by eliminating a tedious task for users. But over the years, facial recognition became a headache for the company itself—it drew regulatory scrutiny along with lawsuits and fines that have cost the company hundreds of millions of dollars.

Today, Facebook (which recently renamed itself Meta) announced that it would be shutting down its facial recognition system and deleting the facial recognition templates of more than 1 billion people.

The change, while significant, doesn’t mean that Facebook is forswearing the technology entirely. “Looking ahead, we still see facial recognition technology as a powerful tool, for example, for people needing to verify their identity, or to prevent fraud and impersonation,” said Jérôme Pesenti, Facebook/Meta’s vice president of artificial intelligence. “We believe facial recognition can help for products like these with privacy, transparency and control in place, so you decide if and how your face is used. We will continue working on these technologies and engaging outside experts.”

In addition to automated tagging, Facebook’s facial recognition feature allowed users to be notified if someone uploaded a photo of them. It also added a user’s name automatically to an image’s alt text, which describes the content of the image for users who are blind or otherwise visually impaired. When the system finally shuts down, notifications and the inclusion of names in automatic alt text will no longer be available.

Controversial technology

As facial recognition has grown more sophisticated, it has become more controversial. Because many facial recognition algorithms were initially trained on mostly white, mostly male faces, they have much higher error rates for people who are not white males. Among other problems, facial recognition has led to people being wrongfully arrested in the US.

In China, the technology has been used to pick people out from crowds based on their age, sex, and ethnicity. According to reporting by The Washington Post, it’s been used to sound a “Uighur alarm” that alerts police to the presence of people from the mostly Muslim minority, who have been systematically detained for years. As a result, the US Department of Commerce sanctioned eight Chinese companies for “human rights violations and abuses in the implementation of China’s campaign of repression, mass arbitrary detention, and high-technology surveillance.”

Image: Pixabay

While Facebook never sold its facial recognition technology to other companies, that didn’t shield the social media giant from scrutiny. Its initial rollout of the technology was opt-out, which prompted Germany and other European countries to push Facebook to disable the feature in the EU.

In the US, some states have passed stringent laws restricting the use of biometrics. Illinois has perhaps the strictest, and in 2015, several residents sued Facebook claiming the “tag suggestions” feature violated the law. Facebook settled the class-action lawsuit earlier this year for $650 million, paying millions of users in the state $340 each.

Today’s announcement comes as Facebook/Meta has come under increasing scrutiny from lawmakers, regulators, and the broader public. The company has been faulted for its role in spreading misinformation in recent elections in the US, helping to foment ethnic violence in Myanmar, and failing to combat disinformation about climate change.

More recently, revelations from documents gathered by whistleblower Frances Haugen have shown that the company was aware that its products harm teens’ mental health, that its algorithms were driving polarization, and that its platform was undermining the company’s efforts to get people vaccinated against COVID.

Activist Post reports regularly about Facebook and unsafe technology. For more information, visit our archives.

